Rapid model building as it fits the dataset quickly. Much less likely to be affected by outliers Highly prone to being affected by outliers There is a reduced risk of overfitting, because of the multiple trees There is a possibility of overfitting of data It cannot handle data with linear patterns

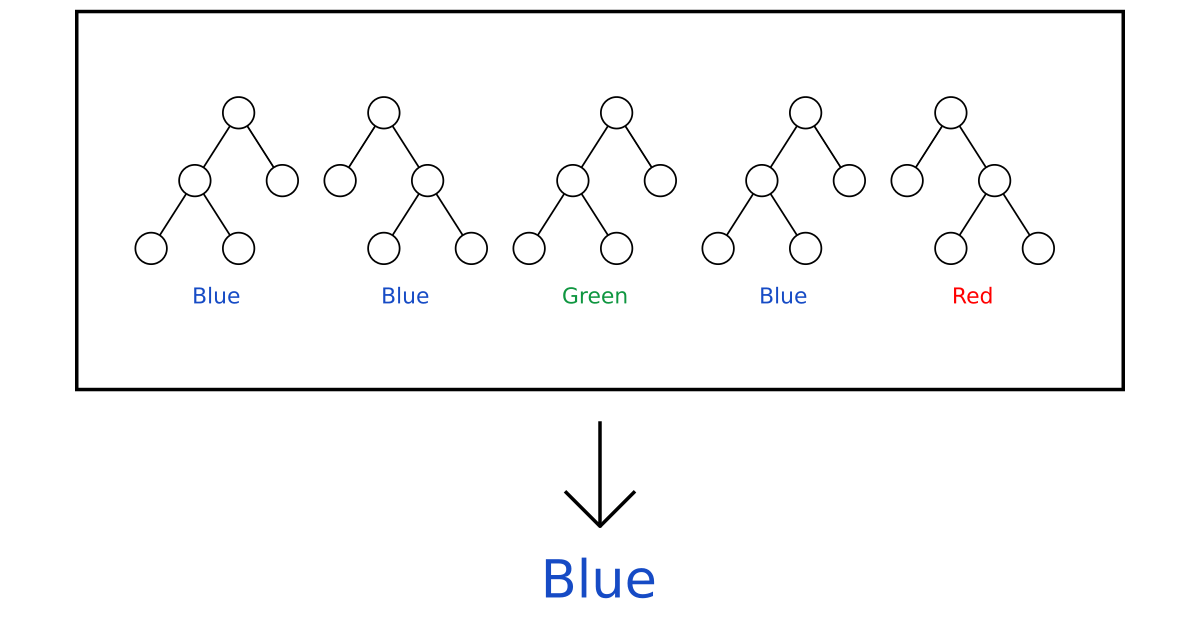

It is best to build solutions for linear patterns of data It is worse for handling data with high dimensionĭifference Between Decision Tree and Random Forest.It cannot be used for linear patterns of data.The major drawback of the random forest model is its complicated structure due to the grouping of multiple decision trees.The algorithm adapts quickly to the dataset.The random forest algorithm is highly accurate and powerful.After that output from each tree is analyzed with a predictive system to find the desired result. We have to build separate decision trees for each option A, B, C, etc., in the same way, we have built a single decision tree. So in this case we have to build a decision tree for each case and group them together to find the best option. If you are planning to buy a new house and you have multiple options like A, B, C, and so on. Let us take an example to understand the working of a random forest algorithm in a better way. Since multiple decision trees are grouped to build the random forest it is more complicated. As the name suggests forest may contain a group of trees the algorithm contains a collection of decision trees.
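One way to see why grouping multiple trees can make the result more reliable is a small probability calculation. The sketch below is not part of any library: `majority_error` is a hypothetical helper, and it rests on the strong assumption that every tree is wrong independently with the same probability and that the final answer is taken by majority vote. Trees in a real forest are correlated, so the actual gain is smaller than these numbers suggest.

```python
from math import comb


def majority_error(n_trees, p_wrong):
    """Probability that a majority of n_trees is wrong at the same time.

    Assumption (idealized): each tree errs independently with
    probability p_wrong. Use an odd n_trees to avoid ties.
    """
    # Smallest number of wrong trees that wins the vote.
    need = n_trees // 2 + 1
    return sum(
        comb(n_trees, k) * p_wrong**k * (1 - p_wrong) ** (n_trees - k)
        for k in range(need, n_trees + 1)
    )


# Compare a single tree with small and larger groups of trees.
for n in (1, 5, 25):
    print(n, round(majority_error(n, 0.3), 4))
```

With a per-tree error rate below 50%, the chance that the vote as a whole is wrong shrinks as more trees are added, which is the intuition behind the reduced-risk entries in the comparison.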

Random forest is a supervised learning algorithm in which a group of decision trees each make a decision and the result is finalized based on the majority. Hence we can say it is a collection of decision trees.
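The idea of a collection of trees deciding by majority can be sketched in a few lines of plain Python. This is a toy under stated assumptions, not a production implementation: each "tree" is a one-split stump on a single numeric feature, every stump is trained on a bootstrap resample of the data, and the names `fit_stump`, `fit_forest`, and `predict` are made up for this illustration. A full random forest would grow deeper trees and also pick a random subset of features at each split.

```python
import random
from collections import Counter


def fit_stump(points):
    """Fit a one-split tree on (x, label) pairs with labels 0 or 1.

    Returns a threshold t; the stump predicts 1 when x >= t, else 0.
    """
    xs = sorted({x for x, _ in points})
    # Candidates: below all points, midpoints between neighbours, above all.
    candidates = (
        [xs[0] - 1]
        + [(a + b) / 2 for a, b in zip(xs, xs[1:])]
        + [xs[-1] + 1]
    )

    def errors(t):
        return sum((x >= t) != bool(label) for x, label in points)

    return min(candidates, key=errors)


def fit_forest(points, n_trees=25, seed=0):
    """Train n_trees stumps, each on its own bootstrap resample."""
    rng = random.Random(seed)
    forest = []
    for _ in range(n_trees):
        sample = [rng.choice(points) for _ in points]  # draw with replacement
        forest.append(fit_stump(sample))
    return forest


def predict(forest, x):
    """Let every stump vote and return the majority label."""
    votes = Counter(int(x >= t) for t in forest)
    return votes.most_common(1)[0][0]


# Toy data: the label is 1 when x is 5 or more.
data = [(x, int(x >= 5)) for x in range(10)]
forest = fit_forest(data)
print(predict(forest, 1), predict(forest, 9))
```

Because each stump sees a slightly different resample, the thresholds differ from tree to tree, and the vote smooths over those differences.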
